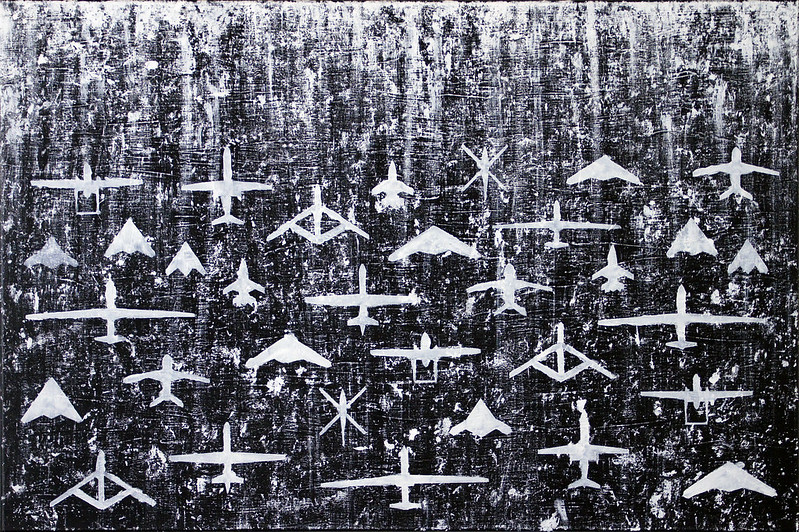

Photo Credit: Mike MacKenzie via flickr

This analysis summarizes and reflects on the following research: Suchman, L. (2020). Algorithmic warfare and the reinvention of accuracy. Critical Studies on Security, 8(2), 175-187.

Talking Points

- Far from improving accuracy and “situational awareness,” the use of automation and artificial intelligence (AI) technology in U.S. counterterrorism operations simply compounds problems that already exist around the criteria for determining who constitutes a “threat.”

- “Targets” are increasingly based on racial/ethnic stereotypes and questionable behavioral profiling where the “simple association of an individual by the surveillance apparatus with a category of targeted persons defined to be a threat is enough.”

- AI data requires processing to render it “actionable,” and, despite its inconclusiveness, this processed data is still used to make decisions on the location of U.S. drone strikes in countries like Iraq, Afghanistan, Yemen, Somalia, and Pakistan.

- AI programs like Project Maven raise serious questions about the criteria that mark particular individuals as “threats” and what living in declared and undeclared conflict zones subject to U.S. aerial strikes means for civilians whose normal, everyday activities could trigger an attack, if identified as “threatening.”

Summary

Project Maven came to the attention of the media and the U.S. general public in the summer of 2018, when several Google employees voiced concerns over the company’s contract to automate the labeling of images from U.S. military drones in order to identify “objects of interest” (including vehicles, buildings, and persons). Few details were available on the project’s intended purpose. In solidarity, academic researchers supported the Google employees’ concerns and added that “further automation…of the US drone program can only serve to worsen an operation that is already highly problematic, even arguably illegal and immoral under the laws and norms of armed conflict.” While Google decided not to renew the contract following its employees’ protests, the contract was picked up and continued by a different company.

This story, and Project Maven more generally, typifies an intersection of critical security studies and technology studies that Lucy Suchman examines in research on automation and artificial intelligence technologies in U.S. counterterrorism strategies. Military analysts argue that the application of these relatively new technologies improves “situational awareness” by improving accuracy in the identification of threats and, as a result, targets, thereby “dissolving the fog of war.” However, Suchman argues that claims of improved accuracy rest on two false premises: that a weapon can actually become more precise in assessing and hitting the “target,” and that the “target” is a legitimate one in the first place. She contends that no technological improvement in a weapon’s accuracy can absolve the military of the lack of clarity on who actually constitutes a threat, especially as threat determination is increasingly based on racial/ethnic stereotypes and questionable behavioral profiling. Further, and even more troubling, no evidence is required to determine threat; instead, the “simple association of an individual by the surveillance apparatus with a category of targeted persons defined to be a threat is enough.” These concerns are compounded, not resolved, through the introduction of artificial intelligence (AI), an emerging technology that attempts, and fails, to abstract data analysis from politics.

Situational awareness: “the instrumentalization of knowledge in the service of control over the combat zone…a mode of human cognition involving accurate perception of a surrounding environment.”

In support of this critique, Suchman reviews literature problematizing the claim of accuracy in weapons systems and highlights how arguments for improved accuracy intersect with situational awareness. Existing literature on automation in weapons systems, like long-range missiles or the Strategic Defense Initiative, focuses on the impossibility of testing under realistic conditions and the lack of any guarantee that a technological system will work in real-world conditions. Furthermore, although technological developments meant to improve accuracy are intended to augment situational awareness, this intention rests on flawed assumptions about the existence of a clear and stable distinction between armed combatants and unarmed civilians in the first place. In fact, military actors, whether on the ground or through surveillance, are part of the context that they surveil, and as such are inserted in “ever more complex and convoluted apparatuses of recognition” with “[technologies] that are themselves implicated in generating the unfolding realities that they are posited to apprehend.” Military intelligence, seeking to fulfill the “mandate to produce targets,” reinforces a narrow, militarized worldview in which adopting new AI technologies cannot possibly improve situational awareness.

AI entails the automated processing of huge amounts of “communications metadata,” including military intelligence data such as video surveillance from drones and open-source data from social media, and then requires human interpretation to create “actionable” information. (See CGP Grey’s publication below in Continued Reading for a basic review of how AI works.) Even though the data results are inconclusive, military analysts will attest to the decisiveness of this type of analysis, which is used to determine the location of U.S. air strikes for operations in Iraq, Afghanistan, Yemen, Somalia, and Pakistan. Yet, investigative evidence from U.S. air strikes in Pakistan from 2004 to 2015 does not support these claims of precision: of the 3,341 fatalities caused by these air strikes, only 1.6% were identified as “high profile or high value targets,” 5.7% were children, 16% were (adult) civilians, and the vast majority (76.7%) of those deaths were categorized as “other.” (These numbers are sourced from the Bureau of Investigative Journalism; see below in Organizations.) Further, according to AI experts cited in the study, AI is extremely difficult to apply in understanding terrorism because terrorism is a rare event, unlike daily occurrences like traffic, from which AI can more easily learn observable patterns.
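To make the scale of these percentages concrete, the short sketch below converts the shares quoted above into approximate head counts. The counts are rounded estimates derived here from the reported percentages and the 3,341 total; they are not figures reported in the study or by the Bureau of Investigative Journalism, and the names `shares_percent` and `approximate_counts` are illustrative only.

```python
# Shares quoted in the text for U.S. air strikes in Pakistan, 2004-2015.
TOTAL_FATALITIES = 3341

shares_percent = {
    "high profile or high value targets": 1.6,
    "children": 5.7,
    "adult civilians": 16.0,
    "other": 76.7,
}


def approximate_counts(total, shares):
    """Convert percentage shares into rounded head counts (estimates only)."""
    return {label: round(total * pct / 100) for label, pct in shares.items()}


# Assumption: counts are reconstructed by rounding, not taken from the source.
counts = approximate_counts(TOTAL_FATALITIES, shares_percent)

# Rounded to one decimal place to absorb floating-point noise.
share_sum = round(sum(shares_percent.values()), 1)

if __name__ == "__main__":
    print(f"Shares sum to {share_sum}%")
    for label, n in counts.items():
        print(f"{label}: roughly {n} of {TOTAL_FATALITIES}")
```

Read this way, the 1.6% figure corresponds to only a few dozen people out of more than three thousand killed, which is the gap between claimed and observed precision that the paragraph describes.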

Initiatives like Project Maven stir up questions about the criteria for identifying “targeted persons” (for instance, what characteristics fit the label “Islamic State militants”?) and what it means for civilians living in declared and undeclared conflict zones subject to U.S. aerial strikes—what seemingly normal, everyday activities could be identified as threatening and therefore trigger an attack? The militarization of new technology and its subsequent dehumanizing effects on civilians must continue to be challenged by careful analysis that critiques assumptions of accuracy in the service of endless war.

Informing Practice

It is not surprising that the question of how artificial intelligence data is analyzed and made meaningful is a thorny one, especially considering recent controversies within Google’s own “ethical AI” team. Timnit Gebru was abruptly fired (along with others who supported her) from her position as co-lead of the ethical AI team at Google in what appeared to be retaliation for refusing to retract a paper that identified how AI data analytics could perpetuate bias in Google’s own search engine. This move called into question the credibility of AI research emanating not just from Google but from all big tech firms that regularly produce research papers. If AI can perpetuate and amplify bias, this emerging technology, an increasingly powerful tool for state surveillance, cannot be understood to be separate from the broader social and political context in which it is situated. In the context of war and the preparation for war, the application of AI to military activities should raise even greater alarm because perpetuating bias in this scenario is lethal to civilian populations under threat of covert U.S. drone strikes. This reality must heighten demands for civilian oversight of and accountability mechanisms for U.S. military operations globally and also call into question the legal authority by which military operations are conducted without congressional oversight.

The troubling implications of AI in military operations are not lost on AI researchers and professionals, 4,500 of whom have signed on to the Campaign to Stop Killer Robots, according to the non-profit organization AI for Peace. The campaign calls on countries to legislate a ban on AI weapons through national laws and to create a new international treaty banning AI weapons and “establish[ing] the principle of meaningful human control over the use of force”; it further calls on all tech companies and organizations to pledge not to contribute to the development of AI weapons. Yet, it is equally important to draw attention to the potential that AI and other types of emerging technology hold for preventing violence and building peace. Technological innovations have included the tracking and countering of hate speech, models helping countries understand and act on COVID’s impact on marginalized communities, and early warning and response systems with regard to election violence.

The complex landscape of emerging technology in peace and security reveals a more fundamental truth about institutions of power. With incredibly high levels of defense spending and a prioritization of militarized domestic and international security in the U.S., it follows that new technology like AI is applied to enhance the nation’s capacity to create war and violence. Put more precisely: If we don’t invest in or plan for peace, then our technological innovations will not be developed with the goal of managing conflicts without violence and building peace. Technology is neutral—AI did not create racial, ethnic, gender, or other forms of bias—but its application is not. Without effort to transform institutions of power or contend with historical legacies that create inequities, new technological tools will only amplify and reinforce these problems. When it comes to military operations, new technology will only become more dangerous, unregulated, and lethal—and will only continue perpetuating the racial and ethnic biases present in the wars waged throughout U.S. history. [KC]

Continued Reading (And Watching)

Metz, C. (2021, March 15). Who is making sure that A.I. machines aren’t racist? The New York Times. Retrieved on April 8, 2021, from https://www.nytimes.com/2021/03/15/technology/artificial-intelligence-google-bias.html

Buolamwini, J. (2017, March 29). How I’m fighting bias in algorithms. TED. Retrieved on April 8, 2021, from https://www.youtube.com/watch?v=UG_X_7g63rY

CGP Grey. (2017, December 18). How machines learn. Retrieved on April 8, 2021, from https://www.youtube.com/watch?v=R9OHn5ZF4Uo

Piper, K. (2021, April 8). The future of AI is being shaped right now. How should policymakers respond? Vox. Retrieved on April 8, 2021, from https://www.vox.com/future-perfect/22321435/future-of-ai-shaped-us-china-policy-response

Carnegie Endowment for International Peace. (n.d.). AI global surveillance technology. Retrieved on April 12, 2021, from https://carnegieendowment.org/publications/interactive/ai-surveillance

Peace Science Digest. (2019, July 22). How feelings make military checkpoints even more dangerous for civilians in Iraq. Retrieved on April 8, 2021, from https://peacesciencedigest.org/how-feelings-make-military-checkpoints-even-more-dangerous-for-civilians-in-iraq/

Discussion Questions

- How does the militarization of new technology have the potential to reinforce systems of oppression?

- What problems could AI help to solve if applied to peacebuilding and violence prevention?

- What meaningful policy measures could be suggested to prevent “killer robots”? Additionally, could a new international ban treaty prevent a new arms race with AI weaponry?

Organizations

PeaceTech Lab: https://www.peacetechlab.org

Bureau of Investigative Journalism, Drone Warfare: https://www.thebureauinvestigates.com/projects/drone-war

Center for Civilians in Conflict: https://civiliansinconflict.org

AI for Peace: https://www.aiforpeace.org/

Campaign to Stop Killer Robots: https://www.stopkillerrobots.org/learn/

Airwars: https://airwars.org/

Key Words: artificial intelligence, technology, military, war, situational awareness, counterterrorism, drones